Internet Development History Explained

Learn about how the internet was developed and where it's headed next.

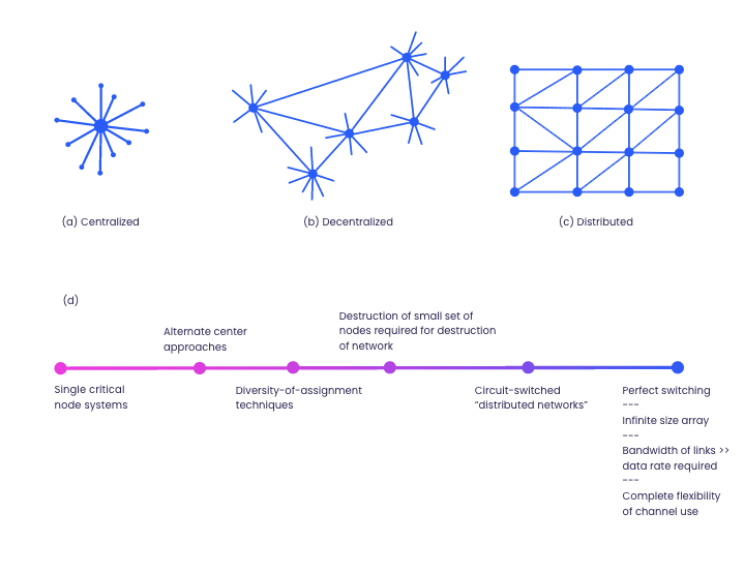

As part of the Pentagon’s preparations against a possible Soviet nuclear attack, military planners saw fit to set up a decentralized communication network that could not be rendered ineffective by a missile strike. Centralized networks depend on central switching facilities, which makes them easier targets. The military therefore wanted to use redundancy to build communications systems that could endure the aftermath of a nuclear attack.

The solution came from the RAND Corporation: redundant, distributed, and survivable communication networks. Paul Baran proposed the idea in his seminal works “On Distributed Communications” and “Digital packet switching,” published in the early 1960s.

The idea behind Paul Baran’s concept was simple: set up a series of interlinked nodes, each with equal status to all the others. In practice, that means each node had to be able to originate, propagate, and receive messages. The design also addressed the security risk of a centralized network, since a distributed network remains functional even when some nodes are inoperable.
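The survivability argument can be illustrated with a small sketch. The two topologies below are hypothetical and not taken from Baran's papers; the code simply checks which nodes can still reach each other after one node fails, using a breadth-first search.

```python
from collections import deque


def reachable(links, start, failed=frozenset()):
    """Return the set of nodes reachable from `start`, skipping failed nodes."""
    if start in failed:
        return set()
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in links.get(node, ()):
            if neighbor not in failed and neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen


# Hypothetical centralized (star) network: all traffic passes through "hub".
star = {
    "hub": ["a", "b", "c", "d"],
    "a": ["hub"], "b": ["hub"], "c": ["hub"], "d": ["hub"],
}

# Hypothetical distributed (mesh) network: every node has several peers.
mesh = {
    "a": ["b", "c", "d"],
    "b": ["a", "c", "e"],
    "c": ["a", "b", "d", "e"],
    "d": ["a", "c", "e"],
    "e": ["b", "c", "d"],
}

star_survivors = reachable(star, "a", failed={"hub"})
mesh_survivors = reachable(mesh, "a", failed={"c"})

print(sorted(star_survivors))  # ['a']
print(sorted(mesh_survivors))  # ['a', 'b', 'd', 'e']
```

Losing the hub isolates every node in the star network, while the mesh keeps all surviving nodes connected after losing its best-connected node.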

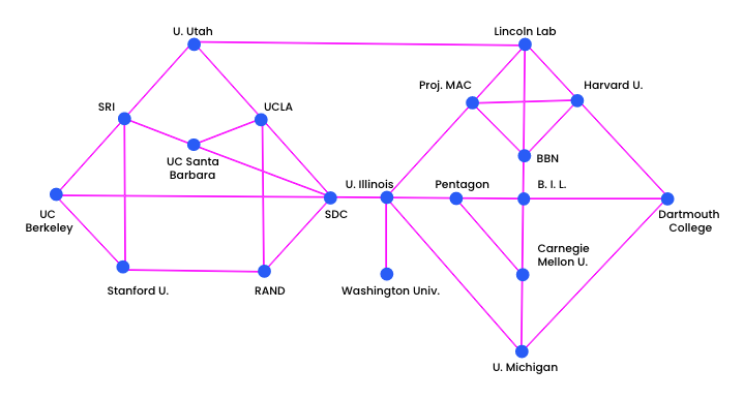

This “distributed” concept would later be put into practice by scientists and researchers who wanted to share computing resources remotely through the ARPANET (Advanced Research Projects Agency Network).

At that time, ARPANET’s primary purpose was to link computers used for Pentagon-funded research projects such as UC Berkeley’s Genie Project and MIT’s Compatible Time-Sharing System project. By December 1969, ARPANET was made up of four nodes stretching from California to Salt Lake City.

At the heart of this project was the US Department of Defense and its research and development agency, ARPA (Advanced Research Projects Agency), which financed the building of this decentralized network.

It was made possible by the pioneering work of Joseph Carl Robnett Licklider, who was at the helm of the Information Processing Techniques Office (IPTO) at ARPA. His papers “The Computer as a Communication Device” (coauthored with Robert Taylor) and “Man-Computer Symbiosis” illustrated his vision of network applications and predicted the use of computer networks for communication, sketching out what he called an “Intergalactic Network”.

That was a revolutionary idea, since computers were then conceived only as calculating devices. J.C.R. Licklider emphasized this in his paper when he argued that “… computers are designed primarily to solve preformulated problems or to process data according to predetermined procedures”.

Internet penetration

In the 1970s and the 1980s, many computer scientists and engineers introduced networking protocols to ARPANET. Among them were Robert Kahn and Vinton Cerf, who developed the Transmission Control Protocol and the Internet Protocol (TCP/IP), which defined how data streams could be exchanged between machines.

Before these protocols, computer networks had no standard way to communicate with each other. That is why the 1980s can be considered the era that paved the way for the growth of the internet as we know it today: a universal networking language could now connect all networks.

To promote the adoption of the TCP and IP protocols, DARPA subsidized the costs of adopting them, which is how a universal networking language came to connect various American research institutions and universities by the late 1980s.

Another milestone of the 1980s was the invention of the Domain Name System (DNS), created in 1983 by Paul Mockapetris. DNS is essentially the phonebook of the internet: instead of memorizing numeric IP addresses, users work with easily understandable and identifiable names, which DNS translates into the corresponding IP addresses.

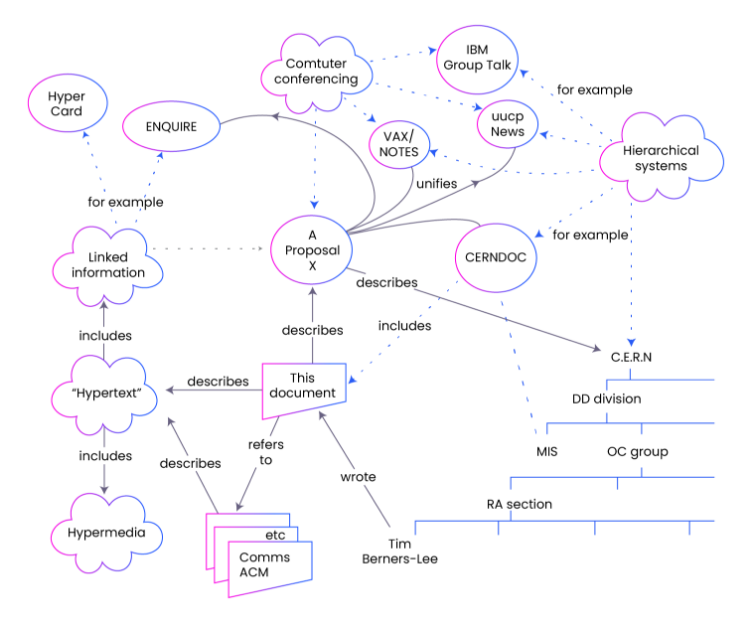

In 1989, scientists Tim Berners-Lee and Robert Cailliau, from the European Organization for Nuclear Research (CERN), created the World Wide Web.

Their work paved the way for a global information system, since its essential idea was to use internet technologies and personal computers to access webpages, files, and electronic documents residing on servers linked across the globe.

The tremendous expansion of the internet we are witnessing today is due in large part to the ripple effect of this web of hypertext documents, rendered visible by browsers.

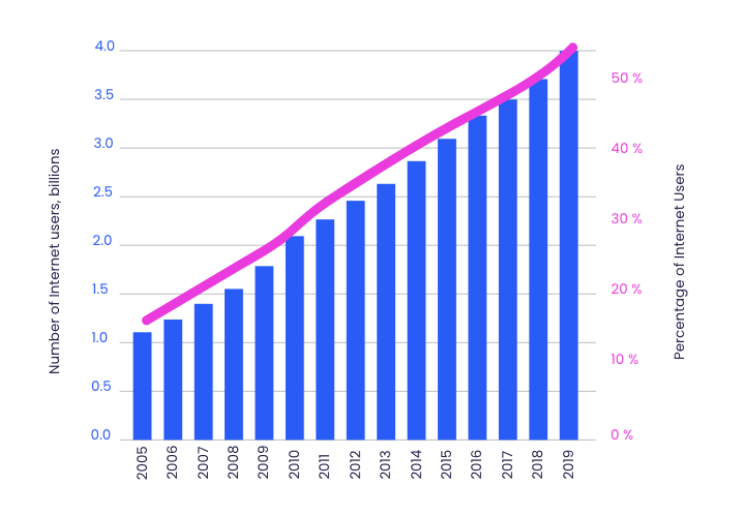

At this stage, the internet saw a massive uptake from the public through the commercialization of the internet by the first internet service providers (ISPs). The number of host servers, internet browsers, and search engines grew exponentially from the 1990s to the early 2000s.

According to the International Telecommunication Union (ITU) database, as of 2019 more than 51 percent of the global population, roughly 4 billion people, were using the internet.

IANA’s policy of allocation of IPv4 blocks

The Internet Protocol plays a vital role in identifying each device on a network. It connects modern computer networks by making sure that messages sent from one computer to another get to the right recipient.

Since the internet is a packet-switching network, the Internet Protocol thus plays a considerable role in ensuring that data packets reach their recipient.
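As a rough illustration of packet switching, the sketch below splits a message into small packets that each carry the destination address, shuffles them to mimic packets taking different routes, and reassembles them at the receiver. The packet layout and the function names `to_packets` and `reassemble` are invented for this example; in the real protocol stack, ordering and reassembly of a data stream are handled by TCP on top of IP.

```python
import random


def to_packets(message: bytes, destination: str, size: int = 8):
    """Split a message into packets that each carry the destination address."""
    return [
        {"dst": destination, "seq": index, "payload": message[offset:offset + size]}
        for index, offset in enumerate(range(0, len(message), size))
    ]


def reassemble(packets):
    """Rebuild the original message by sorting packets on their sequence number."""
    ordered = sorted(packets, key=lambda packet: packet["seq"])
    return b"".join(packet["payload"] for packet in ordered)


message = b"packets may arrive out of order"
packets = to_packets(message, "192.0.2.10")  # documentation-range address

random.shuffle(packets)  # simulate packets arriving in a different order
restored = reassemble(packets)

print(len(packets))         # 4
print(restored == message)  # True
```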

IPv4 was the first official version of the Internet Protocol. It uses 32-bit addresses, meaning that the IPv4 address space has about four billion possible addresses. To date, it is the primary Internet Protocol and carries 94% of internet traffic.
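The 32-bit structure is easy to see with Python's standard `ipaddress` module. This is only an illustrative sketch; the address used is from a range reserved for documentation.

```python
import ipaddress

# 192.0.2.1 belongs to a range reserved for documentation and examples.
addr = ipaddress.IPv4Address("192.0.2.1")

as_int = int(addr)            # the same address as a single 32-bit number
as_bits = f"{as_int:032b}"    # zero-padded binary form, 32 digits long
total = ipaddress.ip_network("0.0.0.0/0").num_addresses

print(as_int)   # 3221225985
print(as_bits)  # 11000000000000000000001000000001
print(total)    # 4294967296
```

The dotted notation is just a readable way of writing four 8-bit numbers, and the whole address space holds 2^32 values.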

The Internet Assigned Numbers Authority (IANA), a component of ICANN (Internet Corporation for Assigned Names and Numbers), has an essential administrative role in regulating the internet.

It is responsible for registering and allocating domain names, IP addresses, and other names and numbers used by internet protocols.

IANA coordinates the global pool of IP addresses and autonomous system numbers (ASNs), which are assigned in blocks to the five Regional Internet Registries (RIRs). In turn, the RIRs make smaller blocks of addresses available to their respective local internet registries (LIRs) and national internet registries (NIRs), which then pass them on to internet service providers.
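The idea of handing down progressively smaller blocks can be sketched with the standard `ipaddress` module. The block sizes below are assumptions chosen to keep the example small (real allocations are far larger), and the prefix is a documentation range rather than a real allocation.

```python
import ipaddress

# Hypothetical block handed to a regional registry (documentation range).
regional_block = ipaddress.ip_network("198.51.100.0/24")

# The regional registry splits it into four /26 blocks for local registries.
local_blocks = list(regional_block.subnets(new_prefix=26))

# One local registry splits its /26 into four /28 blocks for service providers.
isp_blocks = list(local_blocks[0].subnets(new_prefix=28))

for block in local_blocks:
    print(block)
# 198.51.100.0/26
# 198.51.100.64/26
# 198.51.100.128/26
# 198.51.100.192/26

print(isp_blocks[0], isp_blocks[0].num_addresses)  # 198.51.100.0/28 16
```

Each smaller block is fully contained in its parent, which is what lets a registry keep track of who holds which part of the address space.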

IPv4 shortage and the deployment of IPv6

As a reminder, there are only about 4.3 billion IPv4 addresses. That is because, at the time of the transition to TCP/IP in 1983, nobody could have predicted the explosion of internet users we see today.

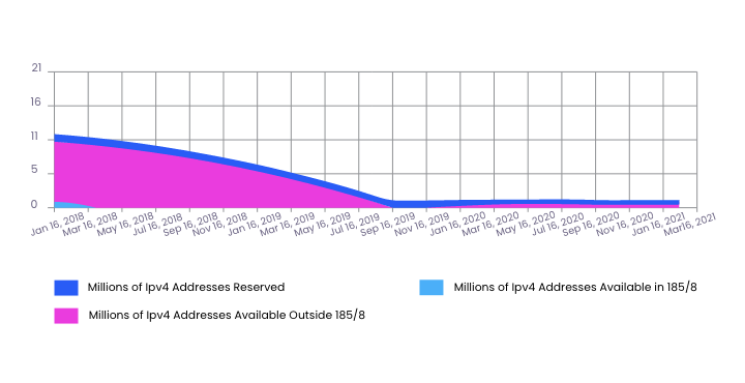

That should not come as a surprise. Since 2012, RIRs like RIPE NCC have been drawing on the final allocation of IP addresses they received from IANA.

In recent years, this scarcity has fueled a significant secondary market for IPv4 addresses, which has led to rapid growth in IPv4 transfers. Nevertheless, some regions of the world are affected by this shortage more than others.

The IPv6 address system was developed to solve the problem of the depletion of IPv4 addresses, which has been occasioned by the astronomical growth in the number of internet users and the proliferation of mobile and permanently connected devices, all increasing the demand for addresses. Without large-scale IPv6 deployment, we risk heading into a future where the internet’s growth will be unnecessarily constrained.

With the gradual decrease of available public IPv4 addresses, the transition to IPv6 becomes inevitable, since IPv6 defines addresses on 128 bits instead of 32. That makes IPv6 practically inexhaustible, because it allows 2^128 unique addresses, or about 3.4 × 10^38.
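The gap between a 32-bit and a 128-bit address space can be checked with plain integer arithmetic, shown here as a short sketch:

```python
ipv4_total = 2 ** 32    # number of distinct 32-bit addresses
ipv6_total = 2 ** 128   # number of distinct 128-bit addresses

print(ipv4_total)           # 4294967296
print(ipv6_total)           # 340282366920938463463374607431768211456
print(f"{ipv6_total:.1e}")  # 3.4e+38

# Every single IPv4 address could be replaced by 2**96 IPv6 addresses.
ratio = ipv6_total // ipv4_total
print(ratio == 2 ** 96)     # True
```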

However, few users have adopted IPv6 addresses so far, and the deployment of IPv6 is only practical if there is a massive uptake. That uptake is hindered by compatibility issues, chief among them that the format of an IPv6 datagram is incompatible with that of IPv4.

In essence, a router configured only for IPv4 cannot process IPv6 packets, because the IPv6 header and address formats differ from those of IPv4. Beyond having 128 bits, IPv6 addresses are written in hexadecimal, as eight 16-bit groups separated by colons.
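The notation can be inspected with Python's standard `ipaddress` module. The address below comes from the prefix reserved for documentation; the sketch shows the full eight-group form, the usual shortened form, and the numeric value of one 16-bit group.

```python
import ipaddress

# 2001:db8::/32 is reserved for documentation and examples.
addr = ipaddress.IPv6Address("2001:db8::1")

groups = addr.exploded.split(":")  # the eight 16-bit groups, as hex strings

print(addr.exploded)        # 2001:0db8:0000:0000:0000:0000:0000:0001
print(addr.compressed)      # 2001:db8::1
print(len(groups))          # 8
print(int(groups[0], 16))   # 8193
```

The `::` in the short form stands for one run of all-zero groups, which is why the same address can be written in both ways.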

Despite this, IPv6 has undeniable benefits over IPv4.

Of prime importance is the fact that IPv6 has built-in support for multicast addressing. That allows data to be sent to multiple destinations simultaneously, which is ideal for audio and video streams broadcast to numerous recipients.

Additionally, IPv6 was designed with more robust security protocols as intrinsic elements, which IPv4 did not have when it was conceived.

Dot-com bubble

The bursting of the dot-com bubble caused the disappearance of a myriad of companies, mainly in the IT sector. As part of an emerging sector of the economy, these companies caused a stock market shock wave that shook the major global financial centers.

Buoyed by the emergence of numerous start-ups created by enterprising young computer scientists, and by a low-inflation environment where interest rates were low, investors were increasingly attracted to the dot-com sector.

In 1995, Netscape’s IPO started the irrational craze for IPOs in the so-called dot-com sector. Its shares were offered at $28 per share before rising to $71 on the same day. This “irrational exuberance,” as Alan Greenspan called it, led investors to blindly buy into small IT companies and embark on a wave of mergers in the hope of striking gold.

However, the euphoria around this new economy would eventually dampen when it became apparent that some of the dot-com companies were grossly overvalued.

Analysts attributed the cause of the dot-com bubble to two main factors. First, investors did not understand internet firms’ underlying economics, which was why the market was very irrational at that time.

Second, traditional accounting methods were ill-suited to building the tailor-made statistical models needed to project future cash earnings for dot-com companies. This is why there was a massive gap between the share price and actual earnings of most of these companies.

Technologies of the internet today

The internet has inarguably spurred various innovations. These include protocols such as HTTP, DNS, POP, TCP, FTP, and SMTP, which have made the internet the crucial technology of the information age.

In terms of infrastructure, the internet was pivotal in developing technologies such as satellite internet, ADSL, WiMAX, optical fiber, 3G, 4G LTE, and 5G.

Telecommunication infrastructure that delivers high-speed internet access made other internet-based inventions possible. Among them are internet radio and Voice over IP (VoIP).

Internet abuse

As IPv4 was adopted, it immediately presented security concerns on account of various routing system vulnerabilities. These include IP spoofing, prefix hijacking, and session hijacking.

These propagation and resilience weaknesses represent a massive threat to the internet’s reliability, which is why a wide range of countermeasures such as packet filtering and intrusion detection are deployed continuously.
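One simple form of packet filtering is to accept a packet only when its source address belongs to a prefix that is expected on the receiving interface, which makes spoofed source addresses easier to reject. The sketch below shows the idea; the rule list and the `accept` function are hypothetical, and real filters run in routers and firewalls rather than in application code.

```python
import ipaddress

# Hypothetical rule: this interface should only see traffic from one customer
# prefix (a documentation range is used here).
ALLOWED_SOURCES = [ipaddress.ip_network("203.0.113.0/24")]


def accept(source: str) -> bool:
    """Return True when the packet's source address is inside an allowed prefix."""
    address = ipaddress.ip_address(source)
    return any(address in network for network in ALLOWED_SOURCES)


print(accept("203.0.113.7"))   # True
print(accept("198.51.100.7"))  # False
```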

Structure of the internet governance

One of the biggest hurdles that slowed the uptake of the internet was the standardization of its protocols and address space management.

Yet, even though the internet is not centrally managed, it is globally coordinated: various task forces, nonprofit organizations, and Tier 1 internet service providers work closely together to maintain its integrity.

Among them is IANA, which sits at the helm of the global policy to allocate IPv4 blocks to the Regional Internet Registries. IANA stipulates the policy governing the allocation of IPv4 address space drawn from the Unallocated Address Number Pool. It also maintains a comprehensive registry of the unallocated address pool and the unassigned pool of RIRs’ address blocks. That in turn allows it to generate a predictive model that can be used to estimate when these blocks will be exhausted.
